Your Guide to Production-Ready AI: From Simple Prompts to Complex Business Agents

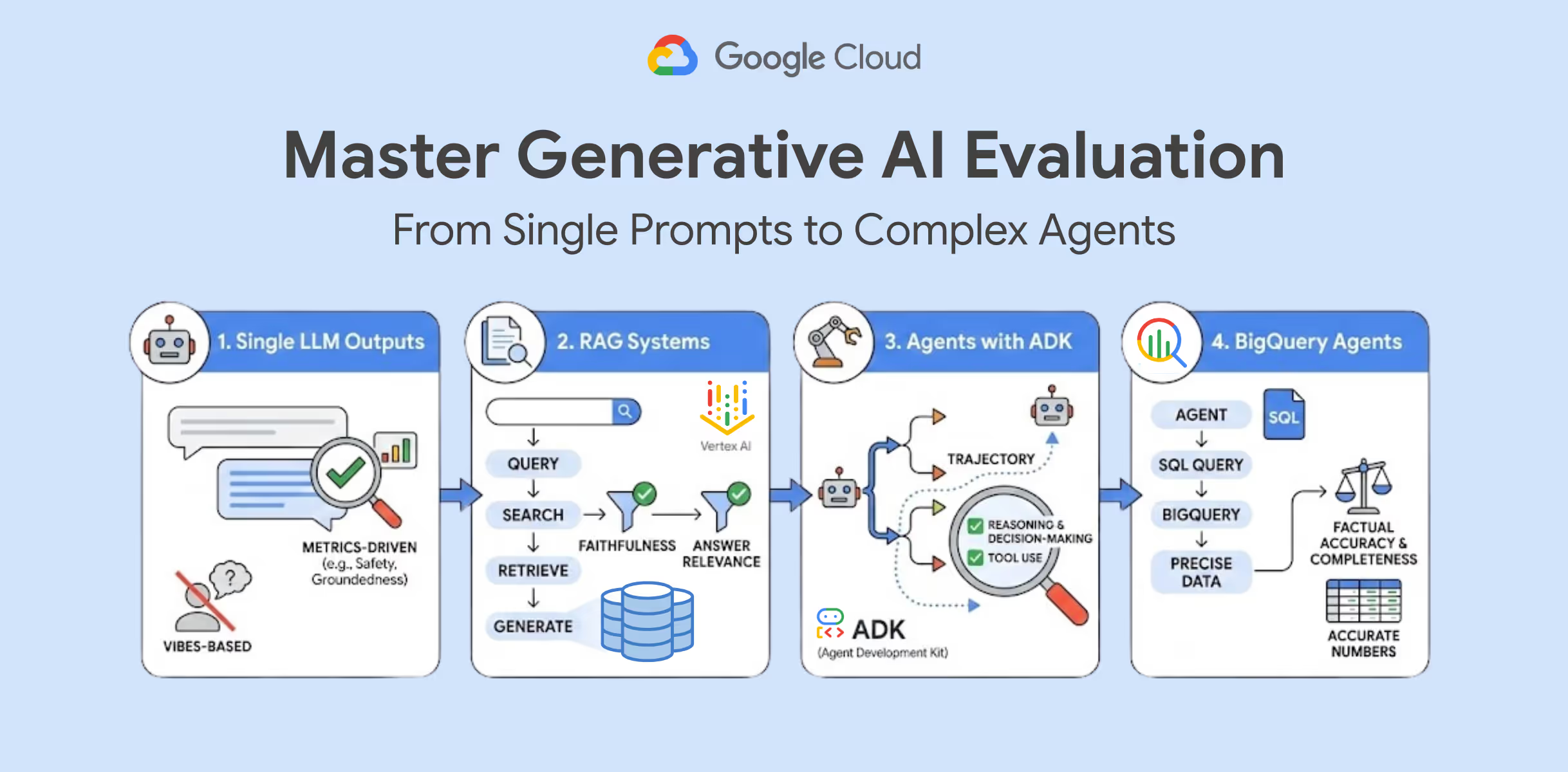

Building AI applications has never been easier, but there is a crucial gap between impressive demos and systems you can trust with real business decisions. Google Cloud's latest guide shows how to bridge that gap through systematic evaluation, moving beyond "does it look right?" to rigorous, data-driven assessment.

Why Evaluation Is Your AI's Missing Link

The difference between a prototype and production isn't just scale—it's confidence. When your AI agent generates SQL queries for financial reports or your RAG system answers customer questions, "pretty good" isn't good enough. You need to know exactly when and how your system might fail.

Google Cloud's GenAI Evaluation service transforms this challenge into a systematic process, measuring safety, groundedness, and instruction-following across your entire AI pipeline.

Four Critical Evaluation Strategies for Business AI

Single Prompt Testing: Start with the fundamentals—automated evaluation of individual model outputs using defined metrics. This foundation catches basic issues before they compound in complex systems.
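As a rough illustration of what "automated evaluation using defined metrics" can look like, here is a minimal sketch in plain Python. It does not use Google Cloud's GenAI Evaluation service; the `Case` class, the two metric helpers, and the sample outputs are all hypothetical, and the model responses are assumed to have been captured ahead of time.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Case:
    prompt: str
    output: str  # model response, assumed to be captured before evaluation


# A metric maps one case to a score between 0.0 and 1.0.
Metric = Callable[[Case], float]


def within_length(max_words: int) -> Metric:
    """Hypothetical metric: 1.0 if the output has at most max_words words."""
    def metric(case: Case) -> float:
        return 1.0 if len(case.output.split()) <= max_words else 0.0
    return metric


def mentions(term: str) -> Metric:
    """Hypothetical metric: 1.0 if the output contains the term (case-insensitive)."""
    def metric(case: Case) -> float:
        return 1.0 if term.lower() in case.output.lower() else 0.0
    return metric


def evaluate(cases: List[Case], metrics: Dict[str, Metric]) -> Dict[str, float]:
    """Average each metric over all cases."""
    return {
        name: sum(metric(case) for case in cases) / len(cases)
        for name, metric in metrics.items()
    }


prompt = "Summarize the refund policy in one sentence."
cases = [
    Case(prompt, "Refunds are issued within 30 days of purchase."),
    Case(prompt, "We value our customers and strive to provide excellent "
                 "service across all channels at all times and in every region."),
]
scores = evaluate(cases, {"concise": within_length(12),
                          "mentions_refund": mentions("refund")})
print(scores)  # {'concise': 0.5, 'mentions_refund': 0.5}
```

Even checks this simple make regressions visible as numbers rather than impressions, which is the point of starting with single-prompt tests.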

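RAG System Validation: Retrieval-augmented generation can fail at more than one point: the retriever can return the wrong passages, and the generator can stray from the passages it was given. That calls for specialized metrics such as "Faithfulness" (whether the answer stays true to the source material) and "Answer Relevance" (whether the answer addresses the user's question).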
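To make the two metrics concrete, the sketch below scores them with simple token overlap. This is a crude lexical stand-in and an assumption on our part, not how any particular evaluation service computes them (production metrics of this kind are usually model-based). The function names, the stopword list, and the sample texts are hypothetical.

```python
import re

# Small illustrative stopword list; a real setup would use something fuller.
STOPWORDS = {"a", "an", "the", "is", "are", "do", "does",
             "when", "what", "how", "for", "of", "to", "in"}


def content_tokens(text: str) -> set:
    """Lowercased alphanumeric tokens with stopwords removed."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def faithfulness(answer: str, context: str) -> float:
    """Share of the answer's content tokens that appear in the retrieved context."""
    answer_tokens = content_tokens(answer)
    if not answer_tokens:
        return 0.0
    return len(answer_tokens & content_tokens(context)) / len(answer_tokens)


def answer_relevance(answer: str, question: str) -> float:
    """Share of the question's content tokens that the answer picks up."""
    question_tokens = content_tokens(question)
    if not question_tokens:
        return 0.0
    return len(question_tokens & content_tokens(answer)) / len(question_tokens)


context = "Orders ship within 2 business days. Returns are accepted for 30 days."
question = "When do orders ship?"
grounded = "Orders ship within 2 business days."
ungrounded = "Orders ship overnight by drone."

print(faithfulness(grounded, context), answer_relevance(grounded, question))      # 1.0 1.0
print(faithfulness(ungrounded, context), answer_relevance(ungrounded, question))  # 0.4 1.0
```

The second answer is fully on topic yet poorly supported by the retrieved text, which is why the two scores are worth tracking separately.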

Agent Trajectory Analysis: Complex agents make dynamic decisions, choosing tools and planning steps based on input. The Agent Development Kit (ADK) helps you evaluate not just final outputs but the reasoning path—crucial for autonomous business systems.
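One way to picture trajectory evaluation is as a comparison between the tool calls an agent actually made and the calls a reviewer expected. The sketch below is plain Python and does not use the ADK's own evaluation API; the three scoring functions and the tool names are hypothetical and assume a trajectory is recorded as an ordered list of tool names.

```python
from typing import List


def exact_match(actual: List[str], expected: List[str]) -> bool:
    """True only if the agent made exactly the expected calls, in order."""
    return actual == expected


def in_order_match(actual: List[str], expected: List[str]) -> bool:
    """True if the expected calls appear in order, allowing extra calls between them."""
    remaining = iter(actual)
    # `step in remaining` advances the iterator, so order is enforced.
    return all(step in remaining for step in expected)


def tool_recall(actual: List[str], expected: List[str]) -> float:
    """Share of the expected tools that the agent used at least once."""
    if not expected:
        return 1.0
    return len(set(expected) & set(actual)) / len(set(expected))


expected = ["lookup_customer", "fetch_invoices", "send_summary"]
actual = ["lookup_customer", "search_docs", "fetch_invoices", "send_summary"]

print(exact_match(actual, expected))     # False
print(in_order_match(actual, expected))  # True
print(tool_recall(actual, expected))     # 1.0
```

Which comparison to enforce is a design choice: exact matching suits tightly scripted workflows, while the in-order check tolerates harmless detours such as an extra search.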

Data-Connected Agent Precision: When agents query business databases, hallucinations become operational risks. Specialized evaluation checks that the queries a SQL-generating agent produces are syntactically valid and that they return factually accurate results.
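A minimal sketch of this kind of check, assuming an in-memory SQLite database stands in for the business data: run the generated query, treat a database error as a syntax failure, and compare the rows with those of a hand-written reference query. The `sales` table, the sample rows, and the `evaluate_sql` helper are hypothetical.

```python
import sqlite3


def build_db() -> sqlite3.Connection:
    """Create a tiny throwaway database to evaluate queries against."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (region TEXT, amount INTEGER)")
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?)",
        [("EMEA", 100), ("EMEA", 50), ("APAC", 70)],
    )
    return conn


def evaluate_sql(conn: sqlite3.Connection, generated: str, reference: str) -> dict:
    """Check that a generated query runs and returns the same rows as the reference."""
    try:
        got = conn.execute(generated).fetchall()
    except sqlite3.Error as exc:
        # The query did not parse or could not run against the schema.
        return {"valid": False, "correct": False, "error": str(exc)}
    want = conn.execute(reference).fetchall()
    # Compare as sorted lists so row order does not affect the verdict.
    return {"valid": True, "correct": sorted(got) == sorted(want), "error": None}


conn = build_db()
reference = "SELECT region, SUM(amount) FROM sales GROUP BY region"

good = evaluate_sql(
    conn, "SELECT region, SUM(amount) AS total FROM sales GROUP BY region", reference)
wrong = evaluate_sql(
    conn, "SELECT region, MAX(amount) FROM sales GROUP BY region", reference)
broken = evaluate_sql(conn, "SELEC region FROM sales", reference)

print(good["valid"], good["correct"])      # True True
print(wrong["valid"], wrong["correct"])    # True False
print(broken["valid"], broken["correct"])  # False False
```

The middle case is the one that matters most for business use: the query is valid SQL and runs cleanly, yet it reports the wrong figures. Generated SQL should only ever be executed against a disposable or read-only copy of the data.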

Making Evaluation Actionable

These aren't theoretical concepts: Google Cloud provides hands-on labs for each evaluation approach, from basic prompt testing to advanced agent assessment. The full curriculum is part of its Production-Ready AI with Google Cloud program.

The key insight: evaluation isn't a final step but an integrated practice that transforms experimental AI into reliable business tools.

🔗 Read the full guide on Google Cloud
